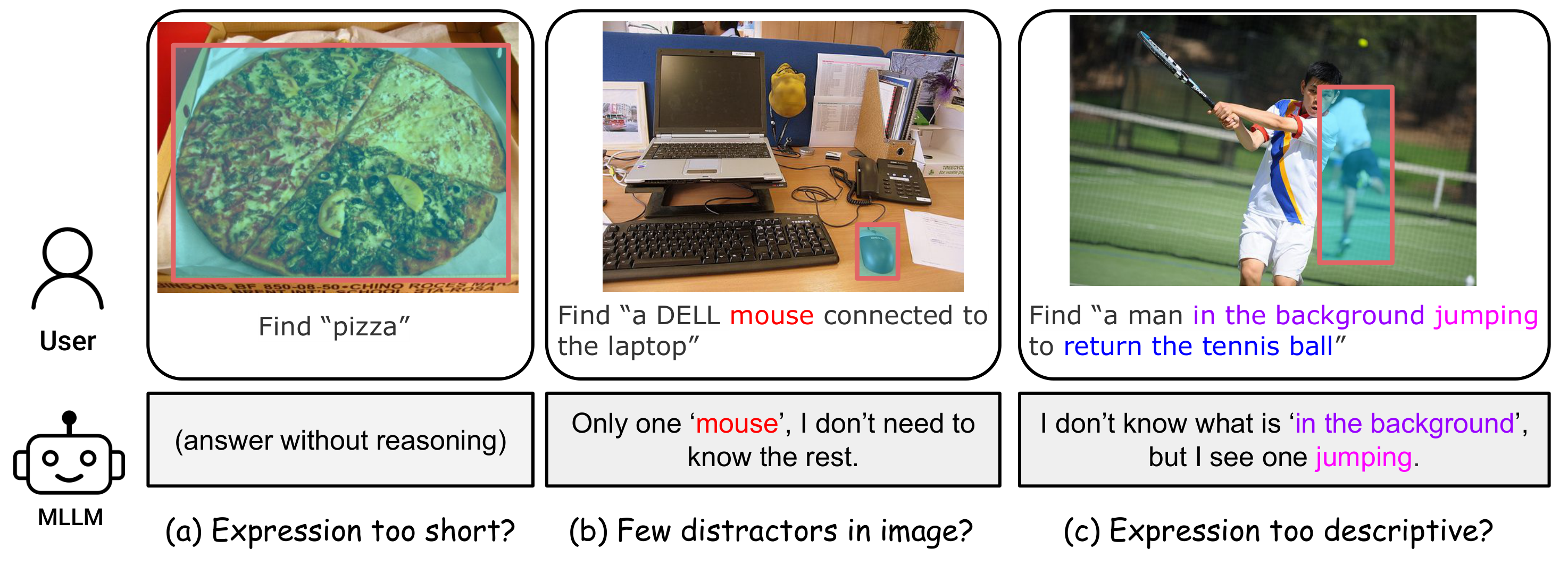

Referring Expression Comprehension (REC) links natural language to region-level visual perception—given an image and a text expression, the task is to localize the described object. Standard benchmarks such as RefCOCO, RefCOCO+, and RefCOCOg have driven years of progress, yet they harbor critical shortcuts:

- Expressions are too short (avg. ~3 words), leaving little reasoning demand.

- Few visual distractors make the target easy to find by elimination.

- Redundant descriptors let models latch onto a single cue and ignore the rest.

Ref-Adv is a modern REC benchmark designed to suppress these shortcuts. Every referring expression is paired with only the information necessary to uniquely identify the target among hard visual distractors. The dataset features an average expression length of 11.5 words, 4.01 distractors per image (each case contains at least 2 distractors), and a 21.25% negation ratio—substantially surpassing existing benchmarks in both linguistic and visual complexity.

We open source Ref-Adv-s with 1,142 cases, and have reproducible results with code on Qwen2.5-3.5 VL series models.